This is what will be so sad for the future. I thought art was dead years ago, but it’s truly dead now.

The analogy sucks because it implies AI is in any way beneficial.

Generative AI or AI in general?

Because AI has tons of uses that are actually very beneficial.

AI is a misnomer anyhow. It’s been pretty definitively proven that these machines aren’t using intelligence of any kind, just really efficient search algorithms that are designed to smash things together in a way that looks like something resembling human speech and human made art. But that is the absolute limit of their ability and their potential.

> It’s been pretty definitively proven that these machines aren’t using intelligence.

You would have to be able to concretely define what "intelligence" is first before you can do that.

> just really efficient search algorithms that are designed to smash things together in a way that looks like something resembling human speech and human made art.

That’s an OK analogy for basic LLMs but that’s not at all how stable diffusion works.

> But that is the absolute limit of their ability and their potential.

Not at all. AI models are constantly developing. Compared to even a year ago they’re so much more advanced, and there’s no reason to believe we’ve hit any sort of peak. And from what I can tell from my friends who are still in academia and at places like DeepMind, there are some truly groundbreaking changes coming pretty soon.

BTW, don’t get me wrong. These things have their uses. It’s just that their misrepresentation as “AI” is causing people to put them in a category they do not fit in. True AI or AGI will not be achieved through these methods. But these GANs have uses and if marketed the way they should be, they could lead to some terrific innovation.

But the way they’re being marketed right now is just a lot of hype whose only goal is to squeeze money out of investors and those gullible enough to believe that this is more than it is. And in the meantime they’re going to do a fuck ton of damage. They steal the artwork of people who actually have artistic talent, thereby taking money out of those creative people’s pockets, and the LLMs that are being wantonly plugged into search engines are outputting misinformation all over the place while reinforcing a lazy attitude toward research and information gathering.

> You would have to be able to concretely define what "intelligence" is first before you can do that.

The Turing test is not a valid determiner because the thought process behind it is 80 years out of date. Intelligence has to be capable of actually processing data on its own without external sources to draw from. Otherwise it’s just copying and pasting an approximation of language and art. See the article below:

https://www.techradar.com/news/calm-down-folks-chatgpt-isnt-actually-an-artificial-intelligence

As the article states, these things should be called GANs but that doesn’t sound as sexy as AI.

This goes for Stable Diffusion as well as every other generative art program in existence.

> Not at all. AI models are constantly developing. Compared to even a year ago they’re so much more advanced, and there’s no reason to believe we’ve hit any sort of peak. And from what I can tell from my friends who are still in academia and at places like DeepMind, there are some truly groundbreaking changes coming pretty soon.

It’s all hype in order to get investment dollars. These will never become AGI or anything close. They don’t “think”, they simply search their databases based on inputs and then output the closest approximation of what the user is asking for. It’s why AI art always looks wonky and LLMs output made up garbage.

Why do people act like generative AI has no uses? AlphaFold2 was a generational leap in protein folding. AlphaTensor was used to find previously unknown matrix multiplication algorithms. These are all examples of generative AI being useful on a societal scale.

True, I just didn’t want to get crucified on here.

oh lol just saw the community name

A very small example: First Person Shooters and other action games would be very boring without enemy AI.

Don’t get confused here. That kind of “AI” has nothing to do with the AI we’re talking about here. We had NPCs decades ago in games when AI wasn’t even a thing yet.

That’s what the person I was replying to was asking. NPC scripting is still a form of AI and can get pretty advanced (Alien: Isolation).

When you’re talking about Generative AI it’s important to specify, because there are legitimate forms of AI that aren’t intended to steal jobs.

> NPC scripting is still a form of AI

Absolutely not. Not even remotely close. NPC scripting follows a pattern, and no matter how advanced and complex you made these algorithms, that’s all they are - algorithms.

AI is actually “learning”, as in it needs material to get good at something.

Those are two completely different things. The only thing they have in common is that they are both run on a computer.

You should be able to understand from the context of this post.

Yeah it’s a bad analogy because aimbots actually get good results.

But they also suck out the joy and soul of games, so it’s pretty apt.

Depends, honestly. I think for solo indie devs, AI is going to be huge because they can create high-quality assets like models, terrain or music without having to learn all the individual programs and skills, which could take another couple of years. And if we’re honest, if you don’t advertise that you used AI for assets, barely anyone will notice.

Until a few years from now, when someone can just describe the exact game they want and it’s just poofed into existence with no human intervention and everyone is just playing their own isolated AI generated slop.

First of all, it’s gonna take a while until we’re at the point that games are just “poofed” into existence. AI can barely generate mods for existing games, let alone full games.

And second - well, yes. If it’s good slop, it’s not an issue tho?

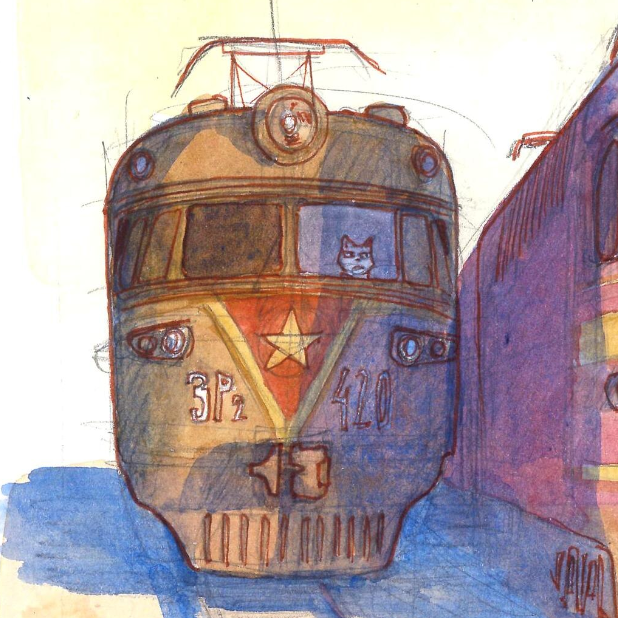

this is clear proof that AI art is soulless and real artists will always outperform AI

What about the very famous equivalent that happened like a year ago where someone won an art competition with an AI generated photo?

poor judges I suppose


As long as progress continues and humanity survives, computer-generated art will eventually outperform humans. It’s pretty obvious: as far as science knows, you could just simulate a full human consciousness and pull images out of that somehow, except it could run in parallel, never deteriorating, never tiring. It’s not a matter of if “AI” can outperform humans, it’s a matter of if humanity will survive to see that and how long it might take.

Explain art performance, chief.

> It’s not a matter of if “AI” can outperform humans, it’s a matter of if humanity will survive to see that and how long it might take.

You are not judging what is here. The tech you speak of, that will surpass humans, does not exist. You are making up a Sci-Fi fantasy and acting like it is real. You could say it may perhaps, at some point, exist. At that point we might as well start talking about all sorts of other technically possible Sci-Fi technology which does not exist beyond fictional media.

Also, would simulating a human and then forcing them to work non-stop count as slavery? It would. You are advocating for the creation of synthetic slaves… But we should save moral judgement for when that technology is actually on the horizon.

AI is a bad term because when people hear it they start imagining things that don’t exist, and start operating in the imaginary, rather than what actually is here. Because what is here cannot go beyond what is already there, as is the nature of the minimization of the Loss Function.

> The tech you speak of, that will surpass humans, does not exist. You are making up a Sci-Fi fantasy and acting like it is real.

The difference is, this isn’t a warp drive or a hologram, relying on physical principles that straight up don’t exist. This is a matter of coding a good enough neuron simulation, running it on a powerful enough computer, with a brain scan we would somehow have to get - and I feel like the brain scan is the part that is farthest off from reality.

> You are advocating for the creation of synthetic slaves…

That’s an unnecessary insult - I’m not advocating for that, I’m stating it’s theoretically possible according to our knowledge, and would be an example of a computer surpassing a human in art creation. Whether the simulation is a person with rights or not would be a hell of a discussion indeed.

I do also want to clarify that I’m not claiming the current model architectures will scale to that, or that it will happen within my lifetime. It just seems ridiculous for people to claim that “AI will never be better than a human”, because that’s a ridiculous claim to have about what is, to our current understanding, just a computation problem.

And if humans, with our evolved fleshy brains that do all kinds of other things can make art, it’s ridiculous to claim that a specially designed powerful computation unit cannot surpass that.

> This is a matter of coding a good enough neuron simulation, running it on a powerful enough computer, with a brain scan we would somehow have to get - and I feel like the brain scan is the part that is farthest off from reality.

So… Sci-Fi technology that does not exist. You think the “Neurons” in the Neural Networks of today are actually neuron simulations? Not by a long shot! They are not even trying to be. “Neuron” in this context means “thing that holds a number from 0 to 1”. That is it. There is nothing else.

> That’s an unnecessary insult - I’m not advocating for that, I’m stating it’s theoretically possible according to our knowledge, and would be an example of a computer surpassing a human in art creation. Whether the simulation is a person with rights or not would be a hell of a discussion indeed.

Sorry about the insulting tone.

> I do also want to clarify that I’m not claiming the current model architectures will scale to that, or that it will happen within my lifetime. It just seems ridiculous for people to claim that “AI will never be better than a human”, because that’s a ridiculous claim to have about what is, to our current understanding, just a computation problem.

That is the reason why I hate the term “AI”. You never know whether the person using it means “Machine Learning Technologies we have today” or “Potential technology which might exist in the future”.

> And if humans, with our evolved fleshy brains that do all kinds of other things can make art, it’s ridiculous to claim that a specially designed powerful computation unit cannot surpass that.

Yeah… you know not every problem is computable, right? The best-known example is the halting problem.

Also, I’m not interested in discussing Sci-Fi future tech. At that point we might as well be talking about Unicorns, since it is theoretically possible for future us to genetically modify an equine and give it one horn on the forehead.

Also, why would you want such a machine anyways?

> Also, why would you want such a machine anyways?


People seem to be assuming that… But no, it’s not that I want it, it’s that, as far as I can tell, there’s no going back. The first iterations of the technology are here, and it’s only going to progress from here. The whole thing might flop, our models might turn out useless in the long run, but people will continue developing things and improving it. It doesn’t matter what I want, somebody is gonna do that.

I know neurons in neural networks aren’t like real neurons, don’t worry, though it’s also not literally just “holds a number from 0 to 1”, that’s oversimplifying a bit - it is inspired by actual neurons, in the way that they have a lot of connections that are tweaked bit by bit to match patterns. No idea if we might need a more advanced fundamental model to build on soon, but so far they’re already doing incredible things.
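The two descriptions of an artificial “neuron” in this exchange (“a number from 0 to 1” versus “a lot of connections that are tweaked bit by bit”) can be compared against a minimal Python sketch of what one unit in a typical feed-forward network computes: a weighted sum of its inputs plus a bias, passed through an activation function. The function names, weights and inputs are made up for illustration, and sigmoid and ReLU are just two common activation choices, not the only ones.

```python
import math
from typing import Callable

def sigmoid(z: float) -> float:
    """Squash any real number into the open interval (0, 1)."""
    return 1.0 / (1.0 + math.exp(-z))

def relu(z: float) -> float:
    """Rectified linear unit: zero for negative inputs, unbounded above."""
    return max(0.0, z)

def neuron(inputs: list[float], weights: list[float], bias: float,
           activation: Callable[[float], float] = sigmoid) -> float:
    """One artificial unit: weighted sum of its inputs plus a bias, then an activation."""
    if len(inputs) != len(weights):
        raise ValueError("need exactly one weight per input")
    z = sum(x * w for x, w in zip(inputs, weights)) + bias
    return activation(z)

# Training would adjust `weights` and `bias` bit by bit; here they are fixed by hand.
print(neuron([1.0, 0.5], [0.8, -0.4], 0.1))        # sigmoid output, between 0 and 1
print(neuron([2.0], [3.0], 0.0, activation=relu))  # ReLU output: 6.0
```

Whether the stored value is limited to 0 to 1 depends on the activation chosen; the weights on the connections are what training changes.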

> That is the reason why I hate the term “AI”.

I don’t quite share the hatred, but I agree. The meaning stretches all the way to NPC behavior in games. Not long ago things like neural network face and text recognition were exciting “AI”, but now that’s been dropped and the word has new meanings.

> Yeah… you know not every problem is computable, right?

Yup, but that applies to our brains same as it does for computers. We can’t know if a program will halt any more than a computer can - we just have good heuristics based on understanding of code. This isn’t a problem of computer design or fuzzy logic or something, it’s a universal mathematical incomputability, so I don’t think it matters here.

In this sense, anything that a human can think up could be reproduced by a computer, since if we can compute it, so could a program.
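The diagonal argument behind the halting problem can be sketched in a few lines of Python, assuming deterministic programs that take no input. `halts` stands for any claimed halting decider (hypothetical, since the point is that none can be correct), and `make_contrarian` and `decider_is_wrong` are illustrative names: the contrarian program does the opposite of whatever the decider predicts about it, so every candidate decider is wrong on at least one program. This illustrates the proof idea; it is not a formal proof.

```python
from typing import Callable

Program = Callable[[], object]       # a program that takes no input
Decider = Callable[[Program], bool]  # claims to answer "does this program halt?"

def make_contrarian(halts: Decider) -> Program:
    """Build a program that does the opposite of what `halts` predicts about it."""
    def contrarian() -> object:
        if halts(contrarian):
            while True:  # predicted to halt, so loop forever instead
                pass
        return "halted"  # predicted to run forever, so halt immediately
    return contrarian

def decider_is_wrong(halts: Decider) -> bool:
    """Return True when `halts` mispredicts its own contrarian program."""
    program = make_contrarian(halts)
    if halts(program):
        # The program would now take the `while True` branch and never halt,
        # so the prediction is wrong. We deliberately do not run it.
        return True
    # The decider said "never halts", yet the program returns at once.
    return program() == "halted"

# The two simplest possible "deciders" both fail.
print(decider_is_wrong(lambda p: True), decider_is_wrong(lambda p: False))  # prints: True True
```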

> At that point we might as well be talking about Unicorns

Sure, we absolutely could talk about unicorns, and could make unicorns, if we ignore the whole whimsical magical side they tend to have in stories 😛

I don’t think anything I’m saying is far off in the realm of science fiction. I feel like we don’t need anything unrealistic, like new superconductors or amazing power supplies, just time to refine the hardware and software on par with current technology. It’s scary, but I do hope either the law catches up before things progress too far or, frankly, a major breakthrough doesn’t happen in my lifetime.

Edit: Right, I also didn’t fit this into my reply: thanks for being civil. Some people seem to go straight to mocking me for believing things they made up, because I’m not on the “it’ll never happen” bandwagon. It’s pretty depressing how the discourse is divided into complete extremes.

No, because the best art isn’t measured in skill, but in relevance to lived experiences.

Until you can upload a bunch of brains and simulate them in full, you can’t capture that experience accurately, and you’ll still have a hard time keeping it up to date.

No, he’s got a point. AI already scores, on average, at least 8.7 kilo-arts on the quantitative art scale we all use already (and have, ever since the Renaissance gave us all that pesky realism), and line always go up, as we know.

Not sure if I need the /s but here it is.

Tell me you don’t understand how generative AI works.

> As long as progress continues and humanity survives, computer-generated art will eventually outperform humans. It’s pretty obvious: as far as science knows, you could just simulate a full human consciousness and pull images out of that somehow, except it could run in parallel, never deteriorating, never tiring. It’s not a matter of if “AI” can outperform humans, it’s a matter of if humanity will survive to see that and how long it might take.

That did it!

> Tell me you don’t understand how generative AI works.

Current generation generative AI is mapping patterns in images to tokens in text description, creating a model that reproduces those patterns given different combinations of input tokens. I don’t know the finer details of how the actual models are structured… But it doesn’t really matter, because if human brains can create something, there’s nothing stopping a sufficiently advanced computer and program from recreating the same process.

We’re not there, not by a long shot, but if we continue developing more computational power, it seems inevitable that we will reach that point one day.

Real regrettable take, come back in 5 years for a nice snack

To the many, many, downvoters…you’re completely insane if you think AI art which has been a thing for like 18 months won’t improve to the point that it’s better than flesh bag artists ever.

You clearly don’t understand how these things work. AI gen is entirely dependent on human artists to create stuff for it to generate from. It can only ever try to be as good as the data sets that it uses to create its algorithm. It’s not creating art. It’s outputting a statistical array based on your keywords. This is also why ChatGPT can get math questions wrong. Because it’s not doing calculations, which computers are really good at. It’s generating a statistical array and averaging out from what its data set says should come next. And it’s why training AI on AI art creates a cascading failure that corrupts the LLM. Because errors from the input become ingrained into the data set, and future errors compound on those previous errors.
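As a drastically simplified illustration of “what its data set says should come next”, here is a toy bigram model in Python: it counts which word follows which in a tiny made-up corpus and returns the most frequent successor of the prompt’s last word. Real LLMs use neural networks conditioned on long contexts rather than raw word-pair counts, so they would not fail on this particular prompt; the sketch only shows how predicting by frequency differs from calculating. `CORPUS`, `train_bigrams` and `predict_next` are hypothetical names.

```python
from collections import Counter, defaultdict

# A made-up toy corpus; "." is treated as an ordinary word.
CORPUS = "two plus two is four . two plus three is five . two plus two is four ."

def train_bigrams(text: str) -> dict[str, Counter]:
    """Count, for every word, how often each other word follows it."""
    words = text.split()
    follows: dict[str, Counter] = defaultdict(Counter)
    for current, nxt in zip(words, words[1:]):
        follows[current][nxt] += 1
    return follows

def predict_next(follows: dict[str, Counter], prompt: str) -> str:
    """Return the most frequent successor of the prompt's last word."""
    last = prompt.split()[-1]
    if last not in follows:
        return "<unknown>"
    return follows[last].most_common(1)[0][0]

model = train_bigrams(CORPUS)
# "is" was followed by "four" twice and "five" once, so the toy model answers
# "four" regardless of what came before "is".
print(predict_next(model, "two plus three is"))  # prints: four
```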

Just like with video game graphics attempting to be realistic, there’s effectively an upper limit on what these things can generate. As you approach a 1:1 approximation of the source material, hardware requirements to improve will increase exponentially and improvements will decrease exponentially. The jump between PS1 and PS2 graphics was gigantic, while the jump between PS4 and PS5 was nowhere near as big, but the differences in hardware between the PS1 and PS2 look tiny today. We used to marvel at the concept that anybody would ever need more than 256MB of RAM. Today I have 16GB and I just saw a game that had 32GB in its recommended hardware.

To be “better” than people at creating art, it would have to be based on an entirely different technology that doesn’t exist yet. Besides, art isn’t a product that can be defined in terms of quality. You can’t be better at anime than everybody else. There’s always going to be someone who likes shit-tier anime, and there’s always going to be parents who like their 4 year old’s drawing better than anything done by Picasso. That’s why it’s on the fridge.

So your argument, if I’m understanding it correctly, is that:

- You believe the model of polygon-based rendering in video games has diminishing returns. No argument. Not sure what this has to do with the generated art which doesn’t have similar constraints and doesn’t work the same way.

- Art is subjective, so calling something better or worse is pointless. Also no argument, this is why it’s absolutely ridiculous for people to be saying all AI generated art is universally bad. It has its purpose in the same way caricature “artists” in European historical districts have a purpose…in theory.

It sounds like we’re on the same page, but you have a reason (which you’ve been unable to coherently represent) you think AI generated art will never improve to the point of being good.

AI art isn’t bad because of its inherent quality (though tons of it is poor quality), it’s bad because it both lacks the essential qualities that people appreciate about art, and because of the ethics around the companies and the models that they’re making (as well as the attitude of some of the people who use it).

AI has no concept of the technical concepts behind art, which is a skill people appreciate in terms of “quality,” and it lacks “intent.” Art is made for the fun of it, but also with an intrinsic purpose that AI can’t replicate. AI is just a fancy version of a meme template. To quote Bennett Foddy:

> For years now, people have been predicting that games would soon be made out of prefabricated objects, bought in a store and assembled into a world. And for the most part that hasn’t happened, because the objects in the store are trash. I don’t mean that they look bad or that they’re badly made, although a lot of them are - I mean that they’re trash in the way that food becomes trash as soon as you put it in a sink. Things are made to be consumed and used in a certain context, and once the moment is gone, they transform into garbage.

Adam Savage had a good comment on AI in one of his videos where he said something like “I have no interest in AI art because when I look at a piece of art, I care about the creator’s intent, the effort that they put into the piece, and what they wanted to say. And when I look at AI, I see none of that. I’m sure that one day, some college film student will make something amazing with AI, and Hollywood will regurgitate it until it’s trash.”

But that’s outside the context of your original post, in which you said that AI art would someday be better than what humans can make. And this is where my point about video game graphics comes in. AI is replicating the art in its training set, much like computer graphics seeking realism are attempting to replicate the real world. There’s no way to surpass this limit with the technology that powers these LLMs, and the closer they get to perfectly mimicking their data and removing the errors that are so common to AI (like the six fingers, strange melty lines, lack of clear light sources, 60% accuracy rate with AI like ChatGPT, etc.), the more their power requirements will increase and the more incremental the advancements will become. We’re in the early days of AI, and the advancements are rapid and large, but that will slow down and the hardware requirements and data requirements are already on a massive scale to the tune of the entirety of the internet for ChatGPT and its competitors.

> AI has no concept of the technical concepts behind art, which is a skill people appreciate in terms of “quality,” and it lacks “intent.” Art is made for the fun of it, but also with an intrinsic purpose that AI can’t replicate.

I generally agree with you, AI can’t create art specifically because it lacks intent, but: The person wielding the AI can very much have intent. The reason so much AI stuff is slop is the same reason that most photographs are slop: The human using the machine doesn’t care to and/or does not have the artistic wherewithal to elevate the product to the level of art.

Is this at the level of the artstation or deviantart feeds? Hell no. But calling it all bad, all slop, because it happens to be AI doesn’t do the people behind it justice.

(Also that’s the civitai.green feed sorted by most reactions, not the civitai.com feed sorted by newest. Mindless deluge of dicks and tits, tits and dicks, that one).

> AI is replicating the art in its training set

That’s a bit reductive: it is very much capable of abstracting over concepts and of banging them against each other. Interesting things are found at the fringes, at the intersections, not on the well-trodden paths. An artist will immediately spot that and try to push a model to its breaking point, ideally multiple breaking points simultaneously, but for that the stars have to align: the user has to be a) an artist and b) willing to use AI. Or at least give it an honest spin.

That fundamentally assumes the exact model used today for, and let’s be clear on this, picking a 16-bit integer, will never be improved upon. It also assumes that even though humans are able to slap two things together and sometimes, often by accident, make it better than the sum of its parts…a machine manipulating integers cannot do the same. It is fundamentally impossible for AI to synthesize anything…except that’s exactly what it’s doing.

There seems to be some other argument going on up above which is about how the actual computation itself can’t compete but that also doesn’t hold water. Ok, a computer is xor-ing things rather than chemical juice and action potentials. But that’s super low level. What it sure appears to be doing is taking things it’s seen as input data and generating variations on that…which humans also do. All art is theft. I just listened to a podcast from an author saying they hadn’t realized this children’s book from when they were 8 impacted their story design when they were 35, and yet now that they’ve reread it they immediately see that they basically stole pieces from the children’s story wholesale.

Finally you have this intent thing in here. Can’t argue that at present there isn’t an intent. But that has never before been a restriction on whether something is art. Plenty of soulless trash is called art. Why is the fruit bowl considered art? But even past that there’s an entirely opposing view where you shouldn’t care what the author thought, making something. What matters is what you think, consuming that thing. If I look at an AI drawing and it sparks some emotional resonance, who is anyone else to say it isn’t important art to me?

I’m not going to argue that there are no issues with AI art today or that the quality is low, but folks in the Lemmy echo chamber are putting human-produced art on an inconceivably high pedestal that cannot possibly stand the test of time.

Their explanation was wasted on useless people like you.

> improve to the point of being good.

So… first you say that art is subjective, then you say that a given piece can be classified as “good” or “bad”. What is it?

Your whole shebang is that it [GenAI] will become better. But if you believe art to be subjective, how could you say the output of a GenAI is improving? How could you objectively determine if the function is getting better? The function’s definition of success is its loss function, which is little more than a measure of how mismatched the output for a given description is with its corresponding image. So, how well it copies the database.

Also, an image is “good” by what standards?

Why are you so obsessed with the image looking “good”. There is a whole lot more to an image than just “does it look good”. Why are you so afraid of making something “bad”? Why can you not look at an image any deeper than “I like it.”/“I do not like it.”, “It looks professional”/“It looks amateurish”? These aren’t meaningful critiques of the piece, they’re just reports of your own feelings. To critique a piece, one must try to look at what one believes the piece is trying to accomplish, then evaluate whether or not the piece is succeeding at it. If it is, why? If it isn’t, why not?

Also, these number networks suffer from diminishing returns.

Also:

In the context of Machine Learning “Neuron” means “Number from 0 to 1” and “Learning” means “Minimize the value of the Loss Function”.

You’ve gone off the rails here; I don’t know what argument you’re trying to make.

Looks like OP used the phrasing “outperform”, but that has the same definition problems.

In any case the argument I’m making is simple

For a given claim “computers will never ‘outperform’ humans at X”, I need you to prove to me that there is a fundamental physical limitation that silicon computing machines have that human computing machines don’t. You can make ‘outperform’ mean whatever, same fundamental issue.

You have stated that AI will improve. Improvement implies being able to classify something as better than something else. You have then stated that art is subjective and therefore a given piece cannot be classified as better than another. This is a logical contradiction.

I then questioned your standards for “good”. By what criteria are you measuring the pieces in order to determine which one is “better” and thus be able to determine if the AI’s output is improving or not? I then tried to, as simply and as briefly as I could, give a basic explanation of how the Learning process actually works. Admittedly I did not do a good job. Explanations of this could take up to two or three hours, depending on how much you already know.

Then comes some philosophizing about what makes a piece “good”. First, questioning your focus on the output pieces being good. Then, asking what the harm of a “bad” image is, in the context of “Why not draw yourself? Too afraid of making something that is not «perfect»?” Then I asked why you refuse, in your analysis of the “goodness” of an image, to go beyond “I like it.”/“I do not like it.”, “It looks professional”/“It looks amateurish”. Such statements are not meaningful critiques of a piece; they are reports of the feelings of the observer, the subjectivity of art we all speak of. However, it is indeed possible to create a more objective critique of a piece, one which goes beyond our tastes. To critique a piece, one must try to look at what one believes the piece is trying to accomplish, then evaluate whether or not the piece is succeeding at it. If it is, why? If it isn’t, why not?

Then, as an addendum, I stated that these functions we call AI have diminishing returns. This is a consequence of the whole loss function thing which is at the heart of the Machine Learning process.

Then, some deceitful definitions. The words “Neuron” and “Learning” in the context of Machine Learning do not have the same meaning as they do colloquially. This causes many to be fooled, and marketing agencies abuse it to market “AI”. Neuron does not mean “simulation of a biological neuron”, it means “Number from 0 to 1”. That means that a Neural Network is actually just a network of numbers between 0 and 1, like 0.2031. Likewise, learning in Machine Learning is not the same as biological learning. Learning here is just shorthand for “Minimizing the value of the Loss Function”.
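“Learning” in the sense above, i.e. repeatedly nudging parameters to lower a loss, can be shown with a minimal Python sketch: plain gradient descent fitting a single weight `w` in the model `y = w * x` by minimizing mean squared error. The data points, learning rate and step count are illustrative assumptions, not taken from any real model, and `mse_loss`, `mse_grad` and `fit` are hypothetical names.

```python
def mse_loss(w: float, data: list[tuple[float, float]]) -> float:
    """Mean squared error of the model y = w * x over (x, y) pairs."""
    return sum((w * x - y) ** 2 for x, y in data) / len(data)

def mse_grad(w: float, data: list[tuple[float, float]]) -> float:
    """Derivative of mse_loss with respect to w."""
    return sum(2 * (w * x - y) * x for x, y in data) / len(data)

def fit(data: list[tuple[float, float]], w: float = 0.0,
        lr: float = 0.05, steps: int = 200) -> float:
    """Plain gradient descent: repeatedly step w against the gradient of the loss."""
    for _ in range(steps):
        w -= lr * mse_grad(w, data)
    return w

DATA = [(1.0, 2.0), (2.0, 4.0), (3.0, 6.0)]  # made-up points on y = 2x
w = fit(DATA)
print(w, mse_loss(w, DATA))  # w ends up very close to 2.0, loss close to 0
```

The only thing the procedure “knows” is the loss; it moves `w` toward whatever value makes its outputs match the data.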

I could add that even the name AI is deceitful, as it has been used as a marketing buzzword since its creation. Arguably, one could say it was created to be one. It causes people to judge the Function not for what it is, as any reasonable actor would, but for what it isn’t: by what, maybe, it might become, if only we [AI companies] get more funding. This is nothing new. The same thing happened in the first AI craze in the 19’s. Eventually people realized the promised improvements were not coming and the hype and funding subsided. Now the cycle repeats: they found something which can superficially be considered “intelligent” and are now doing it again.

> flesh bag artists ever

Dehumanization. Great. What did the artists do to you for you to hate them this much?

Also, do you have any idea of how back propagation works? Probably never heard of it, right?

We’re all flesh bags, what are you talking about? Explain to me how you are not a bag full of flesh (technically a flesh donut, if you consider the sphincters).

I’ve heard of the neural net back propagation, but I’ve just now learned that it’s called that based on flesh bag neural nets. What about it?

> We’re all flesh bags, what are you talking about?

So, in your eyes, all humans are but flesh with no greater properties beyond the flesh that makes up part of them? In your eyes, people are just flesh?

> based on flesh bag neural nets

That is false. Back propagation is not based on how brains work, it is simply a method to minimize the value of a loss function. That is what “Learning” means in AI. It does not mean learn in the traditional sense, it means minimize the value of the loss function. But what is the loss function? For Image Gen, it is, quite literally, how different the output is from the database.
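Strictly, backpropagation computes the gradient of the loss with respect to every weight by applying the chain rule layer by layer; an optimizer such as gradient descent then uses those gradients to lower the loss. Below is a minimal Python sketch for a hypothetical two-weight network `y_hat = w2 * tanh(w1 * x)` with squared-error loss, checked against a finite-difference estimate. The network shape, function names and numbers are arbitrary assumptions for illustration.

```python
import math

def forward(w1: float, w2: float, x: float) -> tuple[float, float]:
    """Return the hidden activation h = tanh(w1 * x) and the output w2 * h."""
    h = math.tanh(w1 * x)
    return h, w2 * h

def loss(w1: float, w2: float, x: float, y: float) -> float:
    """Squared error between the network output and the target y."""
    _, y_hat = forward(w1, w2, x)
    return (y_hat - y) ** 2

def backprop(w1: float, w2: float, x: float, y: float) -> tuple[float, float]:
    """Gradients of the loss with respect to w1 and w2, via the chain rule."""
    h, y_hat = forward(w1, w2, x)
    d_yhat = 2 * (y_hat - y)       # dL/dy_hat
    d_w2 = d_yhat * h              # dy_hat/dw2 = h
    d_h = d_yhat * w2              # dy_hat/dh = w2
    d_w1 = d_h * (1 - h ** 2) * x  # dh/dw1 = (1 - tanh^2(w1 * x)) * x
    return d_w1, d_w2

def numeric_grad(w1: float, w2: float, x: float, y: float,
                 eps: float = 1e-6) -> tuple[float, float]:
    """Central finite differences, used only to check backprop's answer."""
    g1 = (loss(w1 + eps, w2, x, y) - loss(w1 - eps, w2, x, y)) / (2 * eps)
    g2 = (loss(w1, w2 + eps, x, y) - loss(w1, w2 - eps, x, y)) / (2 * eps)
    return g1, g2

print(backprop(0.5, -1.2, 0.8, 0.3))
print(numeric_grad(0.5, -1.2, 0.8, 0.3))  # should closely agree with the line above
```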

The whole “It works like brains do” idea is nothing more than a loose analogy taken too far by people who know nothing about neurology. The source of that analogy is the phrase “Neurons that fire together wire together”, which comes with a million asterisks attached. Of course, those who know nothing about neurology don’t care.

The machine is provided with billions of images with accompanying text descriptions (written by whom?). You then input the description of one of the images and figure out a way to change the network so that when the description is input, its output will match, as closely as possible, the accompanying image. Repeat the process for every image and you have a GenAI function. The closer the output is to the provided data, the lower the loss function’s value.

You probably don’t know what any of that is. Perhaps you should educate yourself on what it is you are advocating for. 3Blue1Brown made a great playlist explaining it all. Link here.

Correct, humans are flesh bags. Prove me wrong?

I’m not sure what the rest of the message has to do with the fundamental assertion that AI will never, for the entire future of the human race, “outperform” a human artist. It seems like it’s mostly geared towards telling me I’m dumb.

> I’m not sure what the rest of the message has to do with the fundamental assertion that AI will never, for the entire future of the human race, “outperform” a human artist. It seems like it’s mostly geared towards telling me I’m dumb.

It is my attempt at an explanation of how the machine fundamentally works, which, as an obvious consequence of its nature, cannot do anything but mimic. I’m pretty sure you do not know the inner workings of the “Learning”, so yes… I’m calling you incompetent… in the field of Machine Learning. I even gave you a link to a great in-depth explanation of how these machines work! Educate yourself so that your ignorance (in this specific field) vanishes.

Correct, humans are flesh bags. Prove me wrong?

-

Human is "A member of the primate genus Homo, especially a member of the species Homo sapiens, distinguished from other apes by a large brain and the capacity for speech. "

-

Flesh is "The soft tissue of the body of a vertebrate, covering the bones and consisting mainly of skeletal muscle and fat. "

-

Flesh does not have brains or the capacity for speech

-

Therefore, Humans are not flesh

I suppose I should stop wasting my time talking to you then, as you see me as nothing more than an inanimate object with no consciousness or thoughts, as is flesh.

-

It’s proof of nothing really. Just because a drawn picture won once means squat. Also, AI can be used alongside drawing - for references for instance. It’s a tool like any other. Once you start using it in shit ways, it results in shit art. Not to say it doesn’t have room to improve tho

Also, imagine if the situation were reversed and an AI drawing were entered into a drawing contest instead. People would be livid instead of celebrating someone breaking the rules.

Except that already happened, and people were livid. Your correct assessment of such a scenario says a lot more than your half hearted defences for AI art.

Wat?

Yeah, so people were pissed off when AI art was entered into a drawing contest; why are people celebrating someone cheating and putting a drawing into an AI contest?

The only people I’ve ever heard say AI is good for “references” are people who aren’t artists.

Because AI makes for LOUSY references. (Unless your art style specifically involves clothing pieces melding into each other without rhyme or reason and chthonic horrors for hands and limbs.)

Just because a drawn picture won once means squat

True, a sample of one means nothing, statistically speaking.

AI can be used alongside drawing

Why would I want a function drawing for me if I’m trying to draw myself? In what step of the process would it make sense to use?

for references for instance

AI is notorious for not giving the details someone would pick a reference image for. Linkie

It’s a tool like any other

No, they are not “a tool like any other”. I do not understand how you could see going from drawing on a piece of paper to drawing much the same way on a screen as equivalent to an autocomplete function operated by typing words into one or two prompt boxes and adjusting a bunch of knobs.

Also, just out of curiosity, do you know what “backpropagation” is, in the context of Machine Learning? And “Neuron” and “Learning”?

No, they are not “a tool like any other”. I do not understand how you could see going from drawing on a piece of paper to drawing much the same way on a screen as equivalent to an autocomplete function operated by typing words into one or two prompt boxes and adjusting a bunch of knobs.

I don’t do this personally but I know of wildlife photographers who use AI to basically help visualize what type of photo they’re trying to take (so effectively using it to help with planning) and then go out and try and capture that photo. It’s very much a tool in that case.

What a stupid fucking idea for a contest. “Press a button until something interesting pops out. Best button pusher wins.” Glad it got subverted like that.

Yeah man, I hate photography contests too.

I’m sorry you lack creativity and understanding.

I’m also a traditional artist and do some photography as well. But by all means attack me personally because I don’t agree with you.

Tell me you know nothing about photography without using those words.

I mean - I do some professional underwater photography, and sometimes it can feel like that. If 1/20 of my photos are keepers, I’m doing pretty good.

Of course getting it to the point where I can shoot 5-shot bursts and pick the good one still requires a lot of knowledge of optics, lighting, camera settings, etc and a ton of editing on the back end, but in the moment it kinda do be like that.

I’m strictly an amateur snapshot taker. So I’m lucky if 1 of 100 photos are things I consider “good”.

This is why I respect people who can reach 1 in 10 keepers. They know things I don’t understand.

You seem like you’re fun at parties

An AI-generated contest… that’s the most low-effort contest ever. Glad this person did what they did and used real skill.

Like a book recommendation contest

even a book recommendation contest could only be this dumb if the only way to participate was to shout out random words and hope it would coincide with a good book title

Concur. I would actually like a book rec event

I highly recommend Schachnovelle by Stefan Zweig

It’s the opposite of the OP’s headline.

Aimbot works because being good at games is essentially bending your skills to match a simulation, aimbot can have the simulation parameters written into it.

LLMs are blenders for human-made content with zero understanding of why some art resonates and other art doesn’t. A human with decent skill will always outperform an LLM because the human knows what the ineffable qualities are that make a piece of art resonate.

100% yes but just because I really hate how everyone conflates AI with LLMs these days I have to say this: The LLM isn’t generating the image, it’s at most generating a prompt for an image generating AI (which you could also write yourself)

psa, you get more control this way: instead of asking an LLM to generate an image, you can just say “generate an image with this prompt: …”

idiotic of course that that’s necessary. it’s the kind of thing openai does – you’d think they’d provide “open” access to dall-e.

or just use a diffusion model locally.

It’s not useful to talk about the content that LLMs create in terms of whether they “understand it” or don’t. How can you verify if an LLM understands what it’s producing or not? Do you think it’s possible that some future technology might have this understanding? Do humans understand everything they produce? (A lot of people get pretty far by bullshitting.)

Shouldn’t your argument equally apply to aimbots? After all, does an aimbot really understand the strategy, the game, the je-ne-sais-quoi of high-level play?

What front end is this? Looks gorgeous

Is it not mono? It looks like mono.

Edit: oh I’m sleep deprived, I thought you said font.

I appreciate the enthusiasm

Nitter

Same one that I used to link to the source https://xcancel.com/

Haha, humans taking over AI jobs!

He’s a modern day John Henry.

Somehow they invented a contest that’s more boring than watching paint dry.

I’m shocked I haven’t seen captchas of the form “choose which image is AI generated”

Instead they give you 9 AI Generated Images that kinda sorta look like the thing you’re supposed to click on.

People who use Lemmy would be able to tell the difference most of the time, but the average person would have zero idea.

Just look at any of the YouTube videos with obviously AI-generated clickbait thumbnails that get tens of millions of views. Or all of the shitty, obvious Photoshop thumbnails that existed before AI.

No, nope they wouldn’t. Generally speaking when I explain why something posted here is AI, I get upvoted, when I explain why something is unlikely to be AI, I get at best controversial votes, while next to me a post with the equivalent of “I can tell by the pixels” is getting upvoted. It’s very rare for someone to chime in and actually discuss the case in an exploratory manner.

There’s already plenty of drama within the artist community over false AI allegations. Accusations from people who should know better than to accuse someone of using AI because “the shading is too good while the hands are too bad”. Why the hell would Lemmy be better at this than artist Twitter?

Here’s a quick intro on how to spot AI art, and, crucially, how not to.

Friend, I am talking about pictures that look like this:

It was sent to me, along with a bunch of other similar images, by someone who thought it was a real photo.

I am talking about thumbnails generated by early DALL-E, where people’s faces are melting.

Yes everyone on lemmy is both mentally and physically superior to the average “normie”, who is completely incapable of rational thought.

It’s more like people on Lemmy know what to look for and generally have a hatred of AI “art,” so they’re looking to spot it.

How much of it is confirmation bias though?

Exactly they are just smarter, their brains are more attuned to being right

finally someone who gets it

You cheated. Of course if you’re an artist, you’re going to make better art than AI.

I like the artwork, I approve of the message, and this gave me a chuckle.

But c’mon, like, it’s against the rules. If you are annoyed by AI art being submitted to human art contests, you should be annoyed by this too.

The first time I read about AI art being submitted to a human contest and winning, I thought, “how drôle.” Of course, now I see it violates the spirit of competition. AI art should have its own category – and that doesn’t just go one way. Like it or not, AI is a tool and if some people want to explore how to use it to make good content, let’s let them do that in peace. Maybe it will become fractionally less shitty.

Generated art isn’t art. Generative AI artists don’t exist. Calling it a tool implies it helps in the execution of a task when all it actually does is shit out slop based on stolen training data.

You should try the AI Art Turing Test! 50 “art” pieces, you have to decide whether they were human or AI. I got about 70% when I did this.

Actually, I don’t have to be OK with anyone contributing to the burning down of the planet. I feel like adults should have a better understanding of morality than simply “it’s against the rules and is therefore wrong”.

I think most people don’t really develop moral reasoning past “I don’t want to get punished” or, if you’re lucky, “it’s against the rules.”

No man, what are you doing! We already used the “well actually it’s bad because uhhh climate change” argument against cryptocurrencies, you can’t double-dip like that! When you hate on AI you’re supposed to use the “but it’s plagiarism” argument! Everyone knows that!

OK then

- It is bad for the climate

- It’s plagiarism

- We are literally putting our means of expressing ourselves into the hands of a few people. So once they just decide that cats are now not allowed, no one will be able to create memes about cats

- You are fueling a machine that Google is selling to the genocide in Gaza.

- You will never be able to create new things. An AI only has relatively few nodes that are random (so it can output different things when given the same input); let’s say about 10% of your picture is actually new and the rest is plagiarised. You could feed the output back in to make it more random, but feeding something AI-generated into an AI just makes it shit itself. It will not be “random” in the way an artist thinks about finding a new way of expressing themselves, but random as in “let’s just expose a drive to extreme radiation and see what the data looks like after 1000 bitflips”.

- The data collection. If a normal human collected that much data about me I could just sue them for stalking

Just run your AI models locally? Problem solved lol.

So you want to buy me and about 10 million other people a server that can run a halfway-good LLM and pay the electric bills?

Also, it doesn’t change any point except 2.

There are actually quite a few models that can run easily on a mid-range gaming computer (yes, even for image generation, and yes, they run reasonably well), and that same energy would probably be consumed by gaming anyway, so it’s not really a huge difference.

Microsoft even just released an open-weights LLM that runs entirely on CPU and is comparable to the big hosted ones people are paying for.

Edit: just saw what community I’m in, so my comment was probably not appropriate for the community

The electric bills would not be high (per person) jsyk. It’d be comparable to playing a video game when in use.

So you want to buy me and about 10 million other people a server that can run a halfway-good LLM and pay the electric bills?

No, that sounds expensive

Also, it doesn’t change any point except 2.

It does actually

No, that sounds expensive

OK, so you just want everyone to just pull 20k out of their ass to buy themselves hardware to run a LLM on?

It does actually

Tell me how.

it’s like… yeah you can tweak every single parameter and build your own checkpoints and stack hundreds of extra networks on top of one another and that is certainly a skill, but creating art with intent is an entirely different skill. and the first one won’t give you shit if the contest is about creating art with intent.

But is the outcome the important part, or which skill was required to make it?