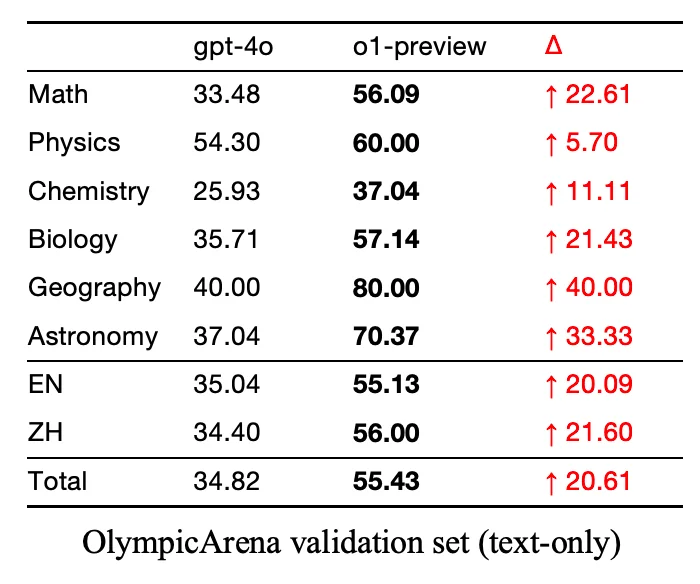

The jump from GPT-4o -> o1 (the preview, not the full release) was a 20% cumulative knowledge jump. If that’s not an improvement in accuracy, I’m not sure what is.

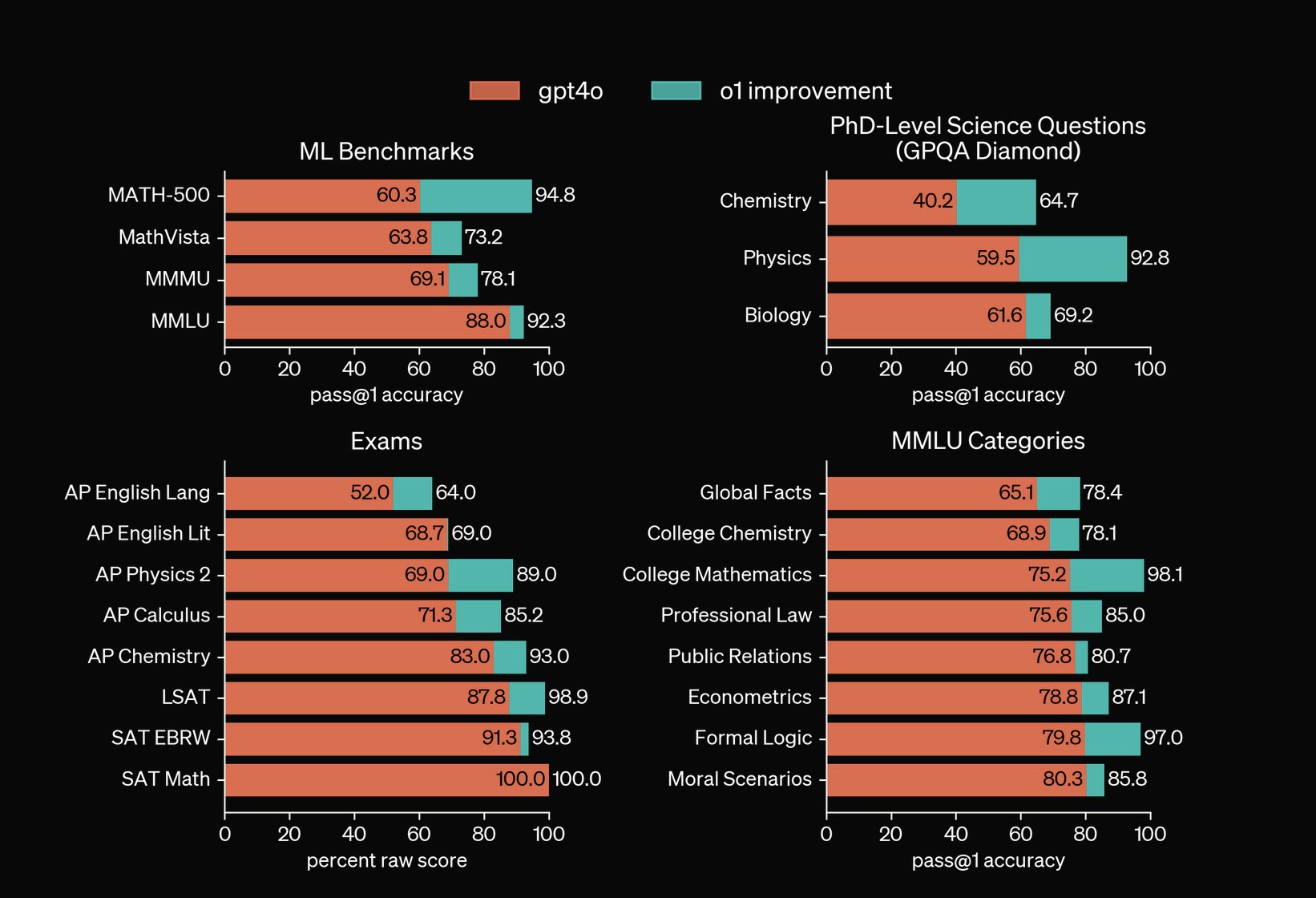

Compare the increase from their V2 GPT-4o model to their reasoning o1-preview model. The jumps from last year’s GPT-3.5 -> GPT-4 were also quite large. Secondly, if you want to take OpenAI’s own research into account, that’s in the second image.

Curious why your perspective is that they’re more of a scam when, by all metrics, they’ve only improved in accuracy?

Let’s not forget how they will only buy electricity back at a variable rate, yet sell it at a static rate and keep the profit.

Also upcharging a tax on selling electricity back into the grid for “use of their equipment”, which is understandable, I get it, but again, c’mon.

The realistic progress that needs to be made here is a battery storage solution, as the power needed during intense solar days is effectively 0% in California nowadays. We need to store that energy and use it at night, but then it eats into PG&E’s profits, and we can’t have that, can we?

Everything done under the guise of “progress” is helping a corporation somewhere.

> nobody out there has come up with a good way to permanently archive all that stuff

Personally, I can’t wait for these glass hard drives being researched to arrive at the consumer or even corporate level. Yes, they’re only writable one time and read-only after that, but I absolutely love the concept of being able to write my entire Plex server to a glass hard drive, plug it in, and never have to worry about it again.

Just another reason our election shouldn’t be entirely dependent on 10,000 people in Pennsylvania.

As much as people around these parts despise algorithmic feeds, I suspect an algorithmic feed would’ve worked far better in this situation, feeding all the academic content to someone immediately on account creation if they show interest or follow peers in the field.

This would’ve helped the migration, since they most likely don’t know the handles of the Twitter accounts posting academic content, as that was algorithmically fed as well. I’m really doubtful it’s a problem with decentralization; it seems to me Mastodon had two problems: it didn’t have a critical mass, and the content that was there wasn’t easy enough to find.

I absolutely agree; I’m not necessarily one to say LLMs will become these incredible general-intelligence-level AIs. I’m really just disagreeing with people’s negative sentiment that they’re becoming worse or are scams, which is not true at the moment.

Yeah, the only reason I didn’t include more is because it’s a pain in the ass pulling together multiple research papers / results over the span of GPT-2, 3, 3.5, 4, o1, etc.