The Fediverse is a great system for preventing bad actors from disrupting “real” human-to-human conversations, because the mods, developers and admins are all working out of a desire to connect people (as opposed to “trust and safety” teams that are more concerned about user retention).

Right now it seems that the Fediverse’s main protection is that it just isn’t a juicy enough target for wide-scale spam and bad-faith agenda pushers.

But assuming the Fediverse does grow to a significant scale, what (current or future) mechanisms are/could be in place to fend off a flood of AI slop that is hard to distinguish from human-written posts? Even the most committed instance admins can only do so much.

For example, I have a feeling all “good” instances will eventually have to turn on registration applications and only federate with other instances that do the same. But it’s not crazy to imagine that GPT could soon outmaneuver most registration questions, which means registration applications will only slow the growth of the problem, not manage it long-term.

Any thoughts on this topic?

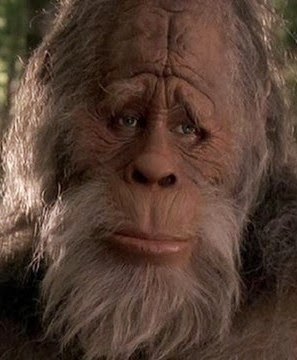

Reminds me of this one:

- source

What’s the incentive to operate an LLM on the fediverse that is truly helpful and not just trying to secretly sell something/push an agenda?

Well, I am not saying that the scenario is a perfect match, just that it reminded me of that:-).

Though to answer your question, if Reddit were all AI slop whereas we were not, then they would be foolish to not exploit (for moar profitz) the source of legitimately true info that could be useful to answer people’s questions, e.g. on topics such as whether and how to use Arch Linux btw. :-P

To train it to mimic genuine human behaviour for applications elsewhere.
